Before we get into how artificial intelligence (AI) and machine learning (ML) are used by both the good guys and the bad guys in cybersecurity, let's define the terms and discuss their capabilities. Defining the terms is important because most folks use them interchangeably or incorrectly.

On a fundamental level, artificial intelligence (AI) security solutions are programmed to identify safe versus malicious behaviors by comparing the behaviors and system traffic of users across an organization or environment to those in a similar environment. Typically, in cybersecurity, AI works most effectively when a baseline is created for user behavior, so that any deviation from that baseline alerts IT security. This process of monitoring for changes in activity is often referred to as “unsupervised learning,” where the system creates patterns without human supervision.
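
To make the baseline idea concrete, here is a minimal sketch in Python. It is not how any particular product works: it assumes a single made-up metric (files downloaded per day by one user) and uses a simple z-score against the historical mean as a stand-in for a real unsupervised model. The function names and the threshold of 3 standard deviations are illustrative assumptions.

```python
from statistics import mean, stdev

def build_baseline(history):
    """Summarize past activity as (mean, standard deviation)."""
    return mean(history), stdev(history)

def is_anomalous(value, baseline, threshold=3.0):
    """Flag a value that sits more than `threshold` standard deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        # A perfectly flat history: any change at all is a deviation.
        return value != mu
    return abs(value - mu) / sigma > threshold

# Hypothetical data: files downloaded per day by one user over two weeks.
history = [12, 15, 11, 14, 13, 16, 12, 15, 14, 13, 12, 14, 15, 13]
baseline = build_baseline(history)

for today in (14, 90):
    status = "ALERT" if is_anomalous(today, baseline) else "ok"
    print(today, status)
```

A day with 14 downloads sits inside the normal range, while 90 downloads is far outside it and would alert IT security. Real tools track many signals at once, but the principle of "learn normal, flag the deviation" is the same.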

The value of AI is that sophisticated AI cybersecurity tools have the capability to analyze enormous data sets allowing them to develop activity patterns that can quickly flag or alert IT security to potential malicious behavior. As we all know, prior to AI security tools, this was a brutally manual process handled by SOC analysts who often couldn’t identify anomalous behavior until after the breach was successful.

Thus, AI emulates the threat-detection aptitude of its human counterparts. In cybersecurity, AI is also used for triaging, aggregating, and sorting through alerts, automating responses, and more. Simply put, AI is often used to augment the first level of a SOC analyst’s responsibility: investigating alerts and events.

Similarly, machine learning (ML) detects threats by constantly monitoring the behavior of the network for anomalies. Machine learning engines process massive amounts of data in near real time to discover critical incidents. These techniques allow for the detection of insider threats, unknown malware, and policy violations.

Machine learning can predict malicious websites online to help prevent people from connecting to them. Machine learning analyzes Internet activity to automatically identify attack infrastructures staged for current and emergent threats.

Algorithms can detect new malicious files and malware that are trying to run on systems throughout the environment. They identify new malicious files and activity based on the attributes and behaviors of malware that has never been seen before and doesn’t have an existing signature.

As we suggested earlier, artificial intelligence (AI) and machine learning (ML) are often used interchangeably, but machine learning is a subset of the broader category of artificial intelligence.

Thus, put in context, AI refers to the general ability of computers to emulate human thought and perform human tasks in real-world environments, while ML refers to the technologies and algorithms that enable systems to identify patterns, make decisions, and improve themselves through experience and data. I’m going to put that in bold below because it is important, and as we said earlier, most people do not know the difference between the terms.

**AI refers to the general ability of computers to emulate human thought and perform human tasks in real-world environments, while ML refers to the technologies and algorithms that enable systems to identify patterns, make decisions, and improve themselves through experience and data.**

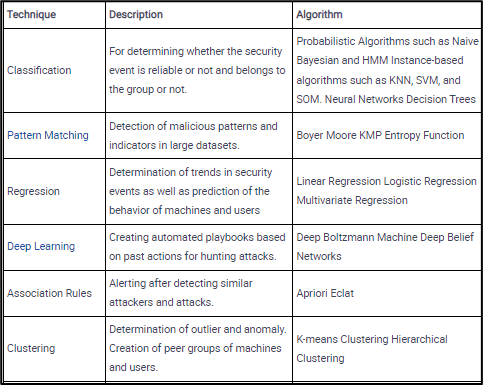

Software developers and computer programmers enable systems to analyze data and create an accurate picture of user and system behavior — basically, they create artificial intelligence systems — by applying tools such as:

Artificial intelligence (AI) has grown increasingly powerful and easy to use. More cybersecurity technologies have embedded AI and machine learning (ML) into their products. As we said, many organizations today do not have enough SOC analysts to capture, analyze, triage, and execute a cyber-attack response fast enough to prevent future breaches.

Organizations are now using AI and ML tools in IT security to empower defenses while hackers break down the client's security protection layers using the same tools. AI-powered ransomware and phishing attacks are becoming common.

As we face unprecedented, sophisticated attacks, AI-based tools for cybersecurity have emerged with great success. These tools can help us reduce our vulnerability to breaches and improve our overall cybersecurity stance.

For example, ML can protect productivity by analyzing suspicious cloud app login activity, detecting location-based anomalies, and conducting IP reputation analysis to identify threats and risks in cloud apps and platforms. In addition, ML can detect malware in encrypted traffic by analyzing encrypted traffic data elements in common network telemetry. Rather than decrypting, machine learning algorithms pinpoint malicious patterns to find threats hidden with encryption.
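
One of the signals mentioned above, the location-based anomaly, is easy to sketch. The Python below checks for "impossible travel": two logins by the same user that are too far apart to be explained by any realistic trip. This is a fixed-threshold rule rather than a trained model, and the `Login` record, the 900 km/h speed limit, and the 50 km minimum distance are all assumptions made for illustration. In practice a value like this would be one feature among many fed to an ML model.

```python
from dataclasses import dataclass
from math import asin, cos, radians, sin, sqrt

@dataclass
class Login:
    user: str
    hour: float   # hours since an arbitrary reference point
    lat: float
    lon: float

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points on Earth, in kilometers."""
    earth_radius_km = 6371.0
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * earth_radius_km * asin(sqrt(a))

def impossible_travel(prev, curr, max_speed_kmh=900.0, min_distance_km=50.0):
    """Return True if moving from prev to curr would require unrealistic speed."""
    distance = haversine_km(prev.lat, prev.lon, curr.lat, curr.lon)
    if distance < min_distance_km:
        return False          # same metro area: not interesting
    elapsed = curr.hour - prev.hour
    if elapsed <= 0:
        return True           # far apart at the same moment
    return distance / elapsed > max_speed_kmh

# Hypothetical logins: New York, then London one hour later and eight hours later.
new_york = Login("alice", 0.0, 40.71, -74.01)
london_1h = Login("alice", 1.0, 51.51, -0.13)
london_8h = Login("alice", 8.0, 51.51, -0.13)

print(impossible_travel(new_york, london_1h))  # True
print(impossible_travel(new_york, london_8h))  # False
```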

AI cybersecurity solutions are able to identify, predict, respond to, and learn about potential threats, without depending on human input. AI security tools can:

Machine learning is a pathway to artificial intelligence. This subcategory of AI uses algorithms to automatically learn insights and recognize patterns from data, applying that learning to make increasingly better decisions.

By studying and experimenting with machine learning, programmers test the limits of how much they can improve the perception, cognition, and action of a computer system.

Deep learning, an advanced method of machine learning, goes a step further. Deep learning models use large neural networks — networks that function like a human brain to logically analyze data — to learn complex patterns and make predictions independent of human input.

As we suggested in our four-part Digital Transformation blog series, digital transformation is happening so quickly that IT security is often an afterthought. Digital transformation strategies have created new attack surfaces requiring SecOps and IR teams to adopt new adaptive control capabilities. In addition, business model transformation is changing everything from how we manage our daily lives to how we run companies.

Digital transformation is the process by which companies embed technologies across their businesses to drive fundamental change.

Consumers are now expecting what they want almost instantaneously because service providers have the technology to provide them with it. Despite the increase in malware attacks and other attacks, organizations are forced to press forward with their transformation strategy or be left behind by their competition.

“Supporting digital transformation initiatives and a remote work model has led to a dramatic increase in the exposed edges of the network,” says Bob Turner, field CISO of higher education at Fortinet. “At the same time, malware, ransomware and other threats continue to challenge organizations by exploiting inconsistently protected endpoint devices.”

Artificial intelligence and machine learning need massive amounts of data to be useful in cybersecurity. Processing huge amounts of data and feeding it into machine learning classifiers is one of the many things AI must do well to become a critical element for organizations.

Without big data, AI and ML have little value in cybersecurity. Sophisticated advanced attacks generate large amounts of data. If an AI and ML engine is not configured optimally, the system will produce more false positives for SecOps.

Knowing the goal and purpose of AI and ML for security operations is critical for organizations. Without precise alignment on how best to leverage AI's abilities, the investment will end up underdeveloped and underutilized. Organizations choosing to invest in AI and ML should know that, beyond the initial cost, ongoing investment in technology and human expertise is critical to getting the most out of the platform.

Organizations making a critical investment in AI and ML should be fully aware of the risk they are accepting. AI-based security tools show promise for processing data and finding better ways to handle rudimentary processes, but AI also comes with the inherent risk of process manipulation. Artificial intelligence systems are susceptible to attacks like any other system.

Adversarial attacks leverage the power of AI for persistent threats against corporate networks. Much like companies invest in ways to turn data flows into usable data sets for machine learning classifiers that help predict the next cyber-attack, hackers also use AI and ML to help determine the most effective way to attack their targets.

Machine learning systems are different from traditional computer programs because their behavior is learned from data rather than fixed at installation. Therefore, they can be vulnerable to attack even if they aren't connected to the Internet. And unlike many traditional security flaws, which usually need network or physical access to the device, machine learning weaknesses (poisoned training data, for example) can exist without any connection to the outside world.

As a real-world example, ML algorithms can be implemented within network traffic analysis to detect network-based attacks such as DDoS attacks. A trained algorithm can detect the large volume of traffic a server receives during a DDoS attack. The algorithms can also discover the attack vector or attack type, such as a TCP flood, which enables SOC teams to take precautions against future threats.
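
Here is a rough sketch of the volume-based idea in Python. It "trains" by learning a packets-per-second ceiling from attack-free traffic (mean plus a few standard deviations), then flags any one-second window above that ceiling and reports the most common packet kind as a hint about the attack type. The traffic numbers, the packet labels, and the multiplier `k=4.0` are all invented for illustration; production detectors use far richer features.

```python
from collections import Counter
from statistics import mean, pstdev

def train_threshold(normal_counts, k=4.0):
    """Learn a packets-per-second ceiling from attack-free traffic."""
    return mean(normal_counts) + k * pstdev(normal_counts)

def detect_flood(window, threshold):
    """window: packet kinds (e.g. 'SYN', 'ACK', 'UDP') seen in one second.

    Returns (is_attack, dominant_kind). dominant_kind is None when the
    volume is within the learned ceiling.
    """
    if len(window) <= threshold:
        return False, None
    kind, _count = Counter(window).most_common(1)[0]
    return True, kind

# Hypothetical training data: packets per second on a quiet day.
normal_counts = [95, 110, 102, 98, 105, 99, 101, 107]
threshold = train_threshold(normal_counts)

quiet_second = ["SYN"] * 10 + ["ACK"] * 90
flood_second = ["SYN"] * 4800 + ["ACK"] * 200

print(detect_flood(quiet_second, threshold))  # (False, None)
print(detect_flood(flood_second, threshold))  # (True, 'SYN')
```

A window dominated by SYN packets is consistent with a TCP SYN flood, which is the kind of attack-type hint a SOC team can act on.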

One more real-world example: ML-based solutions can be trained to detect anomalies in HTTP requests and raise alarms in case of an attack. They can also be trained to classify the type of attack (SQL injection, XSS) and detect attack vectors.
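
To show what "trained to classify" can mean, here is a toy sketch in Python: a small multinomial naive Bayes classifier with add-one smoothing, written from scratch and trained on a handful of made-up requests. The class, the tokenizer, the labels, and the training samples are all hypothetical, and seven samples are nowhere near enough for real use. The point is only to show a model learning token patterns per attack type instead of relying on hand-written rules.

```python
import math
import re
from collections import Counter, defaultdict

# Words and individual punctuation characters become tokens; digits are ignored.
TOKEN = re.compile(r"[a-z]+|[^a-z0-9\s]")

def tokenize(request):
    return TOKEN.findall(request.lower())

class NaiveBayes:
    def __init__(self):
        self.token_counts = defaultdict(Counter)  # label -> token -> count
        self.label_counts = Counter()             # label -> number of samples
        self.vocab = set()

    def fit(self, samples):
        for text, label in samples:
            self.label_counts[label] += 1
            for tok in tokenize(text):
                self.token_counts[label][tok] += 1
                self.vocab.add(tok)

    def predict(self, text):
        total = sum(self.label_counts.values())
        best_label, best_score = None, -math.inf
        for label, n in self.label_counts.items():
            score = math.log(n / total)  # log prior
            denom = sum(self.token_counts[label].values()) + len(self.vocab)
            for tok in tokenize(text):
                # Add-one smoothing so unseen tokens do not zero out the score.
                score += math.log((self.token_counts[label][tok] + 1) / denom)
            if score > best_score:
                best_label, best_score = label, score
        return best_label

# Hypothetical labeled requests.
training = [
    ("/search?q=shoes", "benign"),
    ("/products?id=42", "benign"),
    ("/login?user=alice", "benign"),
    ("/products?id=1' OR '1'='1", "sqli"),
    ("/items?id=5 UNION SELECT password FROM users", "sqli"),
    ("/search?q=<script>alert(1)</script>", "xss"),
    ("/comment?text=<img src=x onerror=alert(1)>", "xss"),
]

model = NaiveBayes()
model.fit(training)

for request in (
    "/search?q=hats",
    "/account?id=7 UNION SELECT card FROM payments",
    "/profile?name=<script>steal()</script>",
):
    print(model.predict(request), request)
```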

AI and ML are becoming increasingly critical for cybersecurity because these technologies learn throughout their lifetimes to recognize new kinds of threats. They draw upon histories of user behavior to create profiles of individuals, organizations, and systems, which allow them to spot deviations from established norms.

Conventional security tools use signatures or indicators of compromise (IOC) to identify threats. This technique can easily identify previously discovered threats. However, signature-based tools cannot detect threats that have not been discovered yet. In fact, they can identify only about 90 percent of threats.

Artificial intelligence can increase the detection rate of traditional techniques to as much as 95 percent. The problem is that you can also get many false positives. The best option is a combination of AI and traditional methods. This merger of the conventional and the innovative can increase detection rates up to 100 percent while minimizing false positives.

In addition, as more organizations have discovered through their incident response activities, including the lessons-learned phase, the early deviations that precede a cybersecurity breach often happen six months to a year before the attack. These "breadcrumbs" appear in the log files or in live SNMP alerts flowing into the SIEM. A deviation found by the CASB DLP, along with an additional crumb found in the endpoint security solution, can now be correlated by extended detection and response (XDR) systems. By capturing telemetry from several hosts and adaptive controls, XDR, through the power of ML, can auto-correlate these events into a kill chain report or align them with the MITRE ATT&CK framework for additional threat hunting and incident response capabilities.
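
The correlation step can be pictured with a small Python sketch. This version is deliberately rule-based rather than ML-driven: it groups events by host, orders them in time, and reports a chain when at least two different security tools saw something on the same host within a one-year window. The `Event` fields, the source names, the host names, and the thresholds are all assumptions made for illustration, and no mapping to specific MITRE ATT&CK techniques is attempted.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class Event:
    host: str
    source: str       # which tool reported it, e.g. "casb_dlp", "endpoint", "siem"
    day: int          # days since the start of the observation period
    description: str

def correlate(events, window_days=365, min_sources=2):
    """Return {host: time-ordered events} for hosts flagged by several tools."""
    by_host = defaultdict(list)
    for event in events:
        by_host[event.host].append(event)

    chains = {}
    for host, host_events in by_host.items():
        host_events.sort(key=lambda e: e.day)
        span = host_events[-1].day - host_events[0].day
        sources = {e.source for e in host_events}
        if len(sources) >= min_sources and span <= window_days:
            chains[host] = host_events
    return chains

# Hypothetical breadcrumbs collected over several months.
events = [
    Event("laptop-17", "endpoint", 200, "unsigned binary executed"),
    Event("laptop-17", "casb_dlp", 20, "unusual upload to personal cloud storage"),
    Event("server-03", "siem", 45, "single failed admin login"),
    Event("laptop-17", "siem", 310, "outbound connection to rare domain"),
]

for host, chain in correlate(events).items():
    print(host)
    for e in chain:
        print(f"  day {e.day:>3}  {e.source:<9} {e.description}")
```

Here the three crumbs on `laptop-17`, each minor on its own, line up into one timeline, while the lone event on `server-03` is left out.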

With XDR capabilities, organizations can leverage the power of AI and ML. However, what if the breadcrumbs were a ruse? What if the breadcrumbs set by hackers were a false flag attack, only attempting to manipulate the ML classifier and automation response?

This reality is essential for organizations to recognize when considering the value of AI and ML. Data manipulation by hackers could happen months or even a year before the attack. By sending in dry-run breadcrumbs, the hacker can measure the method and response of the ML and security orchestration, automation, and response (SOAR) capabilities.
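
A short Python sketch shows why this kind of manipulation matters. It assumes a very simple detector that alerts when daily outbound data exceeds the historical mean plus three standard deviations, and it assumes the detector keeps learning from everything it observes, including the attacker's dry runs. All numbers are invented for illustration.

```python
from statistics import mean, pstdev

def alert_threshold(history, k=3.0):
    """Alert ceiling learned from history: mean plus k standard deviations."""
    return mean(history) + k * pstdev(history)

# Hypothetical daily outbound traffic in MB during normal operations.
clean_history = [100, 104, 98, 101, 97, 103, 99, 102]

# Gradually larger "dry run" transfers the attacker sends months in advance.
dry_runs = [150, 200, 250, 300, 350]
poisoned_history = clean_history + dry_runs

attack_volume = 400  # the real exfiltration, in MB

print("clean threshold:   ", round(alert_threshold(clean_history), 1))
print("poisoned threshold:", round(alert_threshold(poisoned_history), 1))
print("caught with clean baseline:   ", attack_volume > alert_threshold(clean_history))
print("caught with poisoned baseline:", attack_volume > alert_threshold(poisoned_history))
```

With the clean baseline the 400 MB transfer stands out immediately. Once the dry runs have been absorbed into the baseline, the same transfer slips under the learned ceiling, which is exactly the outcome the attacker was testing for.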

How long did it take for the organization to recognize, identify, eradicate, and restore a system after the initial cyber-attack? Hackers probing and testing the client's ML capabilities will continue this reconnaissance a month before the attack is launched.

Over the past few years, artificial intelligence (AI) has emerged as an essential tool for augmenting the efforts made by human information security professionals. Because humans cannot scale up to adequately secure the ever-growing attack surface of the modern organization, AI offers much-needed analysis and detection capabilities that cybersecurity professionals can use to reduce risk and improve security posture.

Human error in managing AI systems does exist. Many potential threats happen across the applications of machine learning and AI tools. Accurate data sets are critical for organizations to fully realize the value of AI and ML for cybersecurity and business operations.

AI and ML can offer enhanced protection without increasing staff or putting a major dent in most organizations’ budgets. Because machine learning is advanced technology, it is not inexpensive. However, one dollar spent on the preventive response capabilities of any organization is going to equal five or six dollars spent dealing with a breach. It’s definitely more expensive to deal with a fire than to buy a fire extinguisher. I heard this at a cyber conference recently and thought it was a great analogy.

To be successful in nearly any industry, organizations must be able to transform their data into actionable insights. Artificial intelligence and machine learning provide organizations the advantage of automating a variety of manual processes involving data and decision making.

By incorporating artificial intelligence and machine learning into their systems and strategic plans, leaders can understand and act on data-driven insights with far greater speed and efficiency.